AI had one heck of a year in 2024 – from jaw-dropping advancements to game-changing regulations, it felt like the world was getting a crash course in the future. It’s been a wild ride for industries, governments, and global partnerships.

We know it’s been a minute since you last heard from us. Life got busy (as it does), but OnlineFuss is back and ready to make it up to you! To kick things off, we’re wrapping up the biggest AI stories of 2024, month by month. And trust us, 2025 is already shaping up to be just as exciting – so stick around because we’re bringing all the latest right here.

January 2024

In January 2024, Microsoft dropped some big news – their AI assistant, Copilot, officially became part of the Microsoft 365 subscription for folks in Australia and Southeast Asia. Basically, if you’re using Word, Excel, or PowerPoint, Copilot is right there with you, ready to lend a virtual hand.

Copilot, powered by OpenAI tech, is like your personal productivity buddy – generating content, handling the boring repetitive stuff, and throwing out smart suggestions to keep you moving. Sounds great, right? Well, not everyone thought so. Microsoft made Copilot a required addition (along with a price bump), and some users weren’t exactly thrilled.

Still, Microsoft’s gamble seems to be paying off. Microsoft says around 70% of Fortune 500 companies have jumped on the Copilot train, a strong sign that businesses are embracing AI. Microsoft’s message? They’re doubling down on AI to boost their software and deliver more value to users (even if some need a little convincing).

This whole thing is part of a bigger tech trend – companies are weaving AI into everything to make tools smarter and more intuitive. But it also raises a big question: how do you innovate without annoying your users?

February 2024

February 2024 was a wild one for AI. OpenAI unveiled Sora – a text-to-video model that can whip up complex video scenes straight from text prompts. Yep, you type something out, and boom – photorealistic videos. It’s like sci-fi becoming reality.

Sora builds on OpenAI’s past generative models (think text and images) but takes things up a notch by diving into video creation. This opens doors for all kinds of stuff – from making mind-blowing content and VR experiences to changing how we approach marketing, education, and entertainment.

Of course, with great power comes… well, a little anxiety. People immediately started talking about deepfakes and the potential for misleading content. Can’t blame them – tech this powerful can be a double-edged sword. The call for stricter regulations and ethical AI use got louder.

To their credit, OpenAI didn’t just throw Sora out there and hope for the best. At launch, they limited access to red teamers and a small group of visual artists, designers, and filmmakers, emphasizing the importance of safety and responsible use. OpenAI’s stance? They’re all about pushing boundaries but know that earning public trust is key to keeping this AI train on the right track.

Sora’s launch wasn’t just another AI milestone – it was one of those moments that made you stop and think about where this tech is heading… and how we make sure it’s for the better.

March 2024

In March 2024, the European Parliament officially approved the EU AI Act, marking a significant step toward establishing a comprehensive regulatory framework for artificial intelligence systems. This groundbreaking legislation prioritizes responsible AI deployment, data privacy, and ethical standards, applying to organizations that use AI within the European Union as well as to providers that place AI systems on the EU market, wherever they are based.

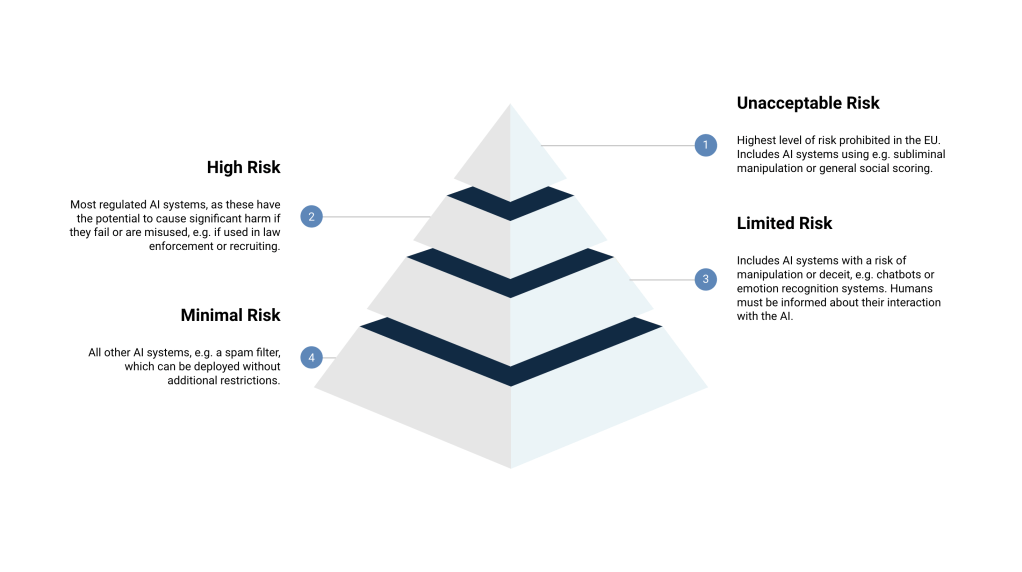

The EU AI Act introduces a tiered classification system that categorizes AI technologies by risk level (Unacceptable, High, Limited, and Minimal), with corresponding obligations for both providers and users. High-risk AI systems, for instance, are subject to stringent requirements, including risk management, technical documentation, transparency toward users, human oversight, and ongoing post-market monitoring.

The overarching goal of the legislation is to encourage innovation while protecting fundamental rights, fostering the development of trustworthy AI, and setting a global benchmark for AI governance. Through the implementation of clear, structured regulations, the EU aims to harmonize technological progress with societal interests, ensuring that AI advancements reflect European values and ethical principles.

The approval of the EU AI Act represents a pivotal moment in the global conversation on AI ethics and regulation, underscoring the necessity of robust frameworks to address the evolving challenges and complexities presented by artificial intelligence.

April 2024

In April 2024, Representative Adam Schiff made headlines by introducing the Generative AI Copyright Disclosure Act in the U.S. Congress. The idea behind it? Companies have to come clean about any copyrighted materials they’ve used to train their generative AI models.

Under this bill, firms must send a notice to the Register of Copyrights – including a sufficiently detailed summary of the copyrighted works used in the training data, plus the dataset’s URL if it is publicly available online – at least 30 days before launching a new or updated AI model. And it doesn’t just apply to future models; even existing AI systems fall under this rule. Companies that skip out on the disclosure could face fines starting at $100.

The bill came hot on the heels of reports revealing that tech giants like Google and Meta have been quietly using copyrighted content to train their AI models – and not everyone’s happy about it. Creators and industry professionals have been raising concerns about intellectual property rights and whether they’re getting a fair shake.

The Act has some serious backing, too. Big names like the Professional Photographers of America (PPA), SAG-AFTRA, the Writers Guild of America, IATSE, and the RIAA are all on board, pushing for more transparency and stronger protections for creators in the AI space.

This legislation is a big step toward finding the balance between tech innovation and protecting the people behind the content. It’s about making sure AI evolves in a way that respects the rights of creators and follows the rules of intellectual property.

May 2024

In May 2024, the AI Seoul Summit took place in South Korea, co-hosted by the South Korean and British governments. This was a big deal – leaders from the G7 (including the U.S., Canada, France, and Germany) showed up, alongside reps from the UN, OECD, EU, and major tech giants like Tesla, Samsung, OpenAI, Google, Microsoft, Meta, and South Korea’s own Naver.

One of the biggest takeaways from the summit was the adoption of the Seoul Declaration for Safe, Innovative, and Inclusive AI. The declaration pushes for stronger international cooperation on AI governance – making sure different countries’ frameworks can work together. It also calls for AI to be developed in a way that puts people first, involving not just governments but also private companies, universities, and civil groups. The goal? Keep AI innovation ethical and beneficial for society as a whole.

The summit didn’t stop there. Ministers from the participating countries also adopted the Seoul Ministerial Statement, which highlights the need to improve AI safety, foster innovation, and ensure inclusivity. One interesting focus was the call to develop low-power chips – with AI growing at lightning speed, energy consumption is becoming a real concern.

The AI Seoul Summit marked a crucial moment for global AI governance. By bringing together such a diverse mix of players, it laid the groundwork for shaping policies that can ensure AI evolves in a way that’s safe, cutting-edge, and fair for everyone.

June 2024

June 2024 was a big month for AI, especially at Apple’s Worldwide Developers Conference (WWDC). Apple unveiled Apple Intelligence, its new suite of AI features, and put the spotlight on Siri, announcing major upgrades to make the assistant smarter and more intuitive across the Apple ecosystem. The goal? A smoother, more seamless experience for users.

But Apple wasn’t the only one making moves in AI. Mistral AI made headlines by raising a massive $640 million in funding – a clear sign that investors are betting big on AI’s potential to shake up industries.

Meanwhile, Figma jumped on the AI bandwagon too, launching new AI-powered design tools. These updates are all about making life easier for designers, cutting down on repetitive tasks and speeding up the creative process.

All in all, June showcased how quickly AI is evolving and finding its way into products we use every day. From personal assistants to design platforms, AI is becoming more embedded in the tech we rely on – and this feels like just the beginning.

July 2024

In July 2024, OpenAI introduced GPT-4o Mini, a lighter, more compact version of its powerhouse GPT-4o model. It’s part of a growing trend – companies like Microsoft, Google, and Mistral are all rolling out smaller, efficient AI models that pack a punch without eating up massive resources.

GPT-4o Mini is all about speed and affordability. At launch, OpenAI priced it at 15 cents per million input tokens and 60 cents per million output tokens, far below its larger models. That opens the door for businesses of all sizes to jump into AI without breaking the bank. And it’s not just about saving money – smaller models generally need less computing power, which can mean a lighter environmental footprint too. From healthcare to education, industries are already finding ways to put this compact model to work.

But the real magic of GPT-4o Mini is how it levels the playing field. Small and medium businesses can now access the kind of advanced AI that used to be reserved for tech giants with deep pockets. Plus, with all the buzz around sustainable AI, the shift toward smaller, more efficient models is a step toward more responsible innovation.

GPT-4o Mini isn’t just another model – it’s part of a bigger shift in the AI world. As smaller, more accessible models become the standard, we’re looking at a future where cutting-edge AI is within reach for just about everyone.

August 2024

In August 2024, the European Union’s Artificial Intelligence Act officially entered into force, marking a major leap forward in global AI regulation. This is the EU making a clear statement – AI needs guardrails, and they’re setting the standard.

So, what’s in the Act? Here’s the breakdown:

- Risk-Based Classification – The EU isn’t treating all AI the same. They’ve grouped systems into four categories:

  - Unacceptable risk – Banned. AI that violates rights or manipulates people? Not happening.

  - High risk – AI used in areas like healthcare or policing faces tight regulations and constant checks.

  - Limited risk – Think AI chatbots or AI-generated content like deepfakes. These need transparency – users must know they’re interacting with AI or looking at AI-generated material.

  - Minimal risk – No heavy oversight. Your Netflix recommendations are safe.

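- General-Purpose AI – Models like GPT-4 or DALL·E? All general-purpose models face transparency duties, such as technical documentation and a summary of their training content, and the most capable ones, which the Act treats as posing “systemic risk,” face extra scrutiny. The EU wants to make sure these powerful tools are used responsibly.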

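- Exemptions – If AI is used exclusively for military, defense, or national security purposes, solely for scientific research and development, or for purely personal, non-professional activities, the Act doesn’t apply.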

- New Oversight Board – The EU is setting up the European Artificial Intelligence Board to help member states stay on the same page and ensure the rules stick.

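This isn’t all happening overnight – the rollout is phased over 6 to 36 months: bans on unacceptable-risk AI apply after 6 months, rules for general-purpose AI after 12, most other rules after 24, and some high-risk obligations after 36, giving businesses time to adjust. But here’s the kicker: even AI providers outside the EU need to comply if their tech is used in Europe. The EU is essentially setting the tone for AI governance worldwide.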

The AI Act is about finding that sweet spot – letting innovation flourish while keeping AI ethical, safe, and trustworthy. This is the EU planting its flag, saying AI can transform the world – but not at the expense of rights and freedoms.

September 2024

In September 2024, OpenAI introduced its “o1” series of large language models (LLMs), starting with o1-preview and o1-mini, and showcasing a major step forward in artificial intelligence. These models are designed to spend more time reasoning before they respond, which helps them handle complex tasks such as coding, mathematics, and scientific problem-solving with notably higher accuracy.

The “o1” models represent a significant leap in AI’s ability to reason and process intricate information, which has far-reaching implications across various industries:

- Technology: Developers can use these models to build more advanced applications, improving user experiences and expanding AI-driven possibilities.

- Education: With enhanced reasoning skills, the models can support personalized learning, providing students with tailored educational content and assistance.

- Healthcare: Their improved problem-solving abilities can assist in diagnostics and treatment planning, potentially leading to better patient outcomes.

OpenAI’s launch of the “o1” series highlights the rapid evolution of AI technology and its growing role in everyday life. As these models gain traction, they are poised to fuel innovation and enhance efficiency across multiple fields.

October 2024

In October 2024, NVIDIA made waves in the AI industry with the release of NVLM 1.0, a series of open-source multimodal large language models. The flagship model, NVLM-D-72B, has 72 billion parameters, and NVIDIA reports that its text-only performance actually improved after multimodal training instead of degrading.

NVIDIA reports that NVLM 1.0 rivals leading proprietary models such as OpenAI’s GPT-4o on vision-language benchmarks, making it a strong open alternative. The open-source nature of NVLM 1.0 is set to drive innovation and collaboration across the AI community, potentially accelerating progress in AI applications for various fields.

NVIDIA’s introduction of NVLM 1.0 highlights its dedication to advancing AI technology and broadening its applications. By making these models open-source, NVIDIA aims to empower researchers, developers, and organizations to harness cutting-edge AI capabilities, enriching the broader AI ecosystem.

This strategic move not only strengthens NVIDIA’s standing in the AI market but also promotes the democratization of AI technology, enabling a diverse range of stakeholders to engage with and benefit from AI-driven advancements.

November 2024

November 2024 was a landmark month for artificial intelligence (AI), featuring key developments across market achievements, legal disputes, marketing challenges, and technological advancements:

NVIDIA’s Market Achievements

NVIDIA reached new financial milestones, reflecting its growing dominance in AI:

- Dow Jones Industrial Average Inclusion: On November 1, S&P Dow Jones Indices announced that NVIDIA would replace Intel in the Dow Jones Industrial Average (effective November 8), solidifying its influence in the tech sector.

- Blackwell Chip Demand: By November, reports revealed that NVIDIA’s entire 2025 production of Blackwell chips was sold out, underscoring the immense demand for its AI hardware.

Legal Challenges in AI Content Usage

The month also brought legal scrutiny to AI practices:

- ANI vs. OpenAI: Asian News International (ANI) filed a lawsuit against OpenAI, claiming unauthorized use of its content for AI model training and fabrication of news stories attributed to ANI. This Delhi High Court case raises critical issues around AI, copyright, and content rights.

AI in Marketing and Consumer Perception

AI’s role in marketing faced criticism:

- Coca-Cola’s AI-Generated Ad: Coca-Cola launched a holiday advertisement created using AI, mirroring a 1995 commercial. Despite its innovative approach, the ad was criticized for feeling “cold and ineffective,” lacking the warmth and authenticity of traditional campaigns.

Advancements in AI Research

Notable progress was made in AI technology:

- Google’s AI Innovations: Google hosted the inaugural AI for Science Forum, released AlphaFold 3’s model code for academic use, and introduced a flood forecasting initiative powered by AI.

- Gemini Model’s Success: Google’s experimental Gemini-Exp-1114 model climbed to the top of the LMSYS Chatbot Arena leaderboard, tying with OpenAI’s GPT-4o and excelling in mathematics, vision, and reasoning tasks.

These highlights from November 2024 reflect the ever-evolving landscape of AI, showcasing its rapid growth, ongoing challenges, and significant breakthroughs shaping the industry.

December 2024

December 2024 brought several exciting developments in artificial intelligence (AI), demonstrating its expanding influence across diverse industries:

DeepSeek’s Breakthrough

- DeepSeek V3: Chinese AI lab DeepSeek, which is backed by the hedge fund High-Flyer, released DeepSeek V3, an open-source Mixture-of-Experts language model with 671 billion total parameters, of which 37 billion are activated for each token. Trained on 14.8 trillion tokens, it rivals top models like GPT-4o and Claude 3.5 Sonnet and outperforms Llama 3.1 and Qwen 2.5, according to DeepSeek’s own benchmarks.

AI in Consumer Products

- Mondelez International’s Innovations: Mondelez International, the company behind Oreo, used AI to accelerate snack product development. By employing machine learning, the company made development up to five times faster, launching 70 new products, including Gluten Free Golden Oreos.

Regulatory Progress

- New York State’s AI Monitoring Law: Governor Kathy Hochul enacted legislation requiring state agencies to review and report on their AI software usage. The law mandates algorithm assessments and prohibits automated decisions on unemployment benefits or child care assistance without human oversight.

AI in Sports Entertainment

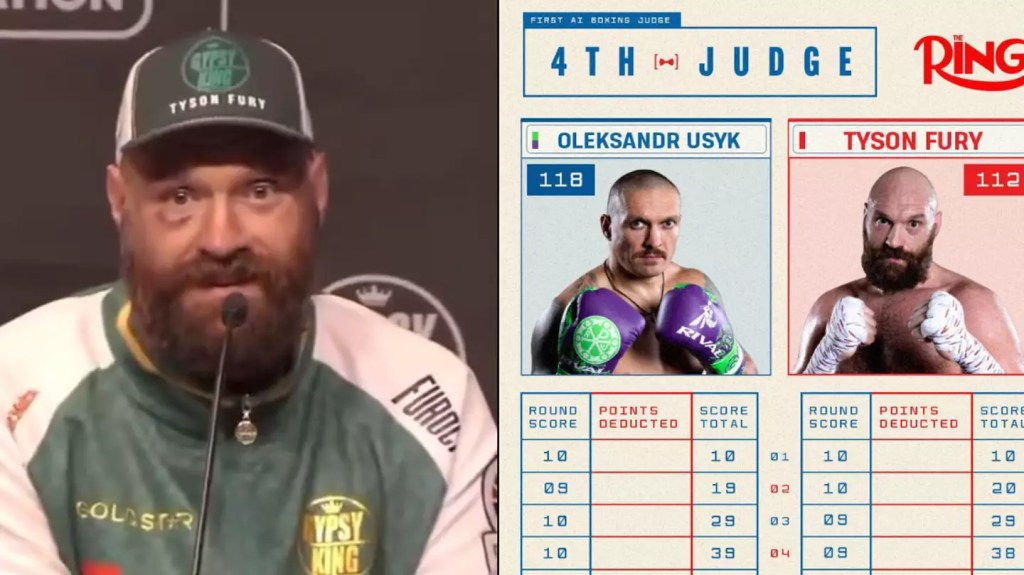

- Virtual Ring Girls and AI Judges: Boxing embraced AI innovation with the concept of AI-generated ring girls, allowing fans to interact with virtual personas. This follows the use of an AI judge in the Tyson Fury vs. Oleksandr Usyk rematch. All three official judges scored the fight 116-112 in favor of Usyk, while the AI judge gave him a slightly wider 118-112 margin, awarding him the third, fourth, and sixth through eleventh rounds.

A Game-Changing Year for AI

2024 was packed with breakthroughs and milestones, highlighting AI’s incredible potential to transform industries and tackle some of society’s biggest challenges. Here at OnlineFuss, we’re excited to see what comes next: innovations that push boundaries and ethical advances that drive progress while making life better and more productive for everyone.
